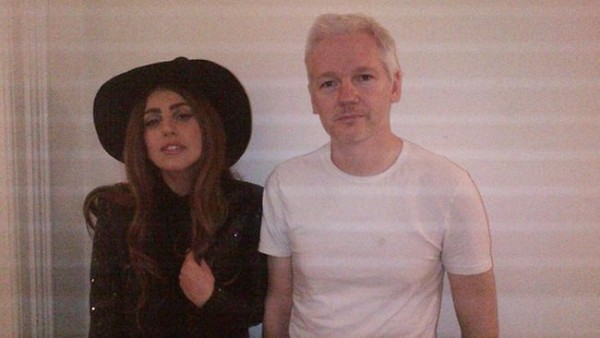

Not all whistleblowers are created equal. Julian Assange is, after all, no Daniel Ellsberg, even if the latter leaker supports him.

The WikiLeaks founder is nothing if not a Trump-ish world figure, having climbed onto that stage while our current President was still more Kim Kardashian than Kim Jong-un, busy weighing the relative merits of Arsenio Hall and Gary Busey in a make-believe boardroom.

Over the past seven years, Assange has repeatedly proven himself deeply irresponsible with both the lives of innocent people, even at-risk ones, and the truth. Slavishly devoted to his own privacy despite having no regard for anyone else’s, he’s a vainglorious, egotistical asshole with a deep misogynistic streak and multiple sexual assault allegations on his public record. That doesn’t even take into account Assange apparently working as a Putin stooge over the last several years, with his organization becoming a Kremlin house organ far more effective than Pravda ever was during the Soviet days.

A question remains despite his odious behavior: Even if what Assange practices is some sort of voodoo journalism, will it endanger genuine practitioners if he’s arrested and tried for espionage? That inquiry was a lot more germane before Trump and hopefully will be again after him, since every U.S. reporter and news organization is a target of the White House’s wrath during this terrible time, no questionable practices required.

In writing about Risk, the Laura Poitras documentary about the world’s second-most-infamous Kremlin crony, Sue Halpern of the New York Review of Books wonders about this very issue. An excerpt:

Despite Assange’s vocal disdain for his former collaborators at The New York Times and The Guardian, his association with those journalists and their newspapers is probably what so far has kept him from being indicted and prosecuted in the United States. As Glenn Greenwald told the journalist Amy Goodman recently, Eric Holder’s Justice Department could not come up with a rationale to prosecute WikiLeaks that would not also implicate the news organizations with which it had worked; to do so, Greenwald said, would have been “too much of a threat to press freedom, even for the Obama administration.” The same cannot be said with confidence about the Trump White House, which perceives the Times, and national news organizations more generally, as adversaries. Yet if the Sessions Justice Department goes after Assange, it likely will be on the grounds that WikiLeaks is not “real” journalism.

This charge has dogged WikiLeaks from the start. For one thing, it doesn’t employ reporters or have subscribers. For another, it publishes irregularly and, because it does not actively chase secrets but aggregates those that others supply, often has long gaps when it publishes nothing at all. Perhaps most confusing to some observers, WikiLeaks’s rudimentary website doesn’t look anything like a New York Times or a Washington Post, even in those papers’ more recent digital incarnations.

Nonetheless, there is no doubt that WikiLeaks publishes the information it receives much like those traditional news outlets. When it burst on the scene in 2010, it was embraced as a new kind of journalism, one capable not only of speaking truth to power, but of outsmarting power and its institutional gatekeepers. And the fact is, there is no consensus on what constitutes “real” journalism. As Adam Penenberg points out, “The best we have comes from laws and proposed legislation which protect reporters from being forced to divulge confidential sources in court. In crafting those shield laws, legislators have had to grapple with the nebulousness of the profession.”

The danger of carving off WikiLeaks from the rest of journalism, as the attorney general may attempt to do, is that ultimately it leaves all publications vulnerable to prosecution. Once an exception is made, a rule will be too, and the rule in this case will be that the government can determine what constitutes real journalism and what does not, and which publications, films, writers, editors, and filmmakers are protected under the First Amendment, and which are not.

This is where censorship begins. No matter what one thinks of Julian Assange personally, or of WikiLeaks’s reckless publication practices, like it or not, they have become the litmus test of our commitment to free speech. If the government successfully prosecutes WikiLeaks for publishing classified information, why not, then, “the failed New York Times,” as the president likes to call it, or any news organization or journalist? It’s a slippery slope leading to a sheer cliff. That is the real risk being presented here, though Poitras doesn’t directly address it.•

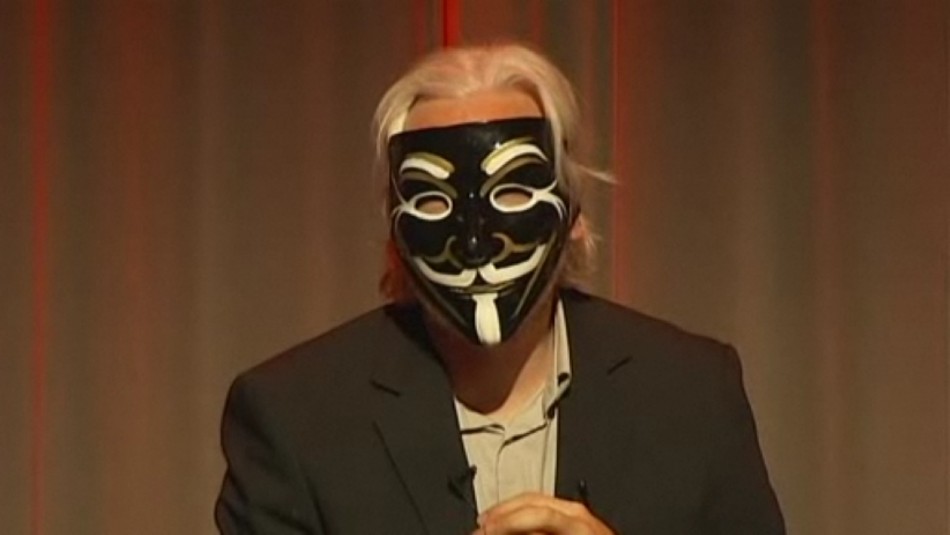

“This is not the film I thought I was making”: