________________________________

Russ Roberts:

Now, to be fair to AI and those who work on it, I think, I don’t know who, someone made the observation but it’s a thoughtful observation that any time we make progress–well, let me back up. People say, ‘Well, computers can do this now, but they’ll never be able to do xyz.’ Then, when they learn to do xyz, they say, ‘Well, of course. That’s just an easy problem. But they’ll never be able to do what you’ve just said’–say–‘understand the question.’ So, we’ve made a lot of progress, right, in a certain dimension. Google Translate is one example. Siri is another example. Waze is a really remarkable, direction-generating GPS (Global Positioning System) thing for helping you drive. They seem sort of smart. But as you point out, they are very narrowly smart. And they are not really smart. They are idiot savants. But one view says the glass is half full; we’ve made a lot of progress. And we should be optimistic about where we’ll head in the future. Is it just a matter of time?

Gary Marcus:

Um, I think it probably is a matter of time. It’s a question of whether we are talking decades or centuries. Kurzweil has talked about having AI in about 15 years from now. A true artificial intelligence. And that’s not going to happen. It might happen in the century. It might happen somewhere in between. I don’t think that it’s in principle an impossible problem. I don’t think that anybody in the AI community would argue that we are never going to get there. I think there have been some philosophers who have made that argument, but I don’t think that the philosophers have made that argument in a compelling way. I do think eventually we will have machines that have the flexibility of human intelligence. Going back to something else that you said, I don’t think it’s actually the case that goalposts are shifting as much as you might think. So, it is true that there is this old thing that whatever used to be called AI is now just called engineering, once we can do it.

________________________________

Russ Roberts:

Given all of that, why are people so obsessed right now–this week, almost, it feels like–with the threat of super AI, or real AI, or whatever you want to call it, the Musk, Hawking, Bostrom worries? We haven’t made any progress–much. We’re not anywhere close to understanding how the brain actually works. We are not close to creating a machine that can think, that can learn, that can improve itself–which is what everybody’s worried about or excited about, depending on their perspective, and we’ll talk about that in a minute. But, why do you think there’s this sudden uptick, spike in focusing on the potential and threat of it right now?

Gary Marcus:

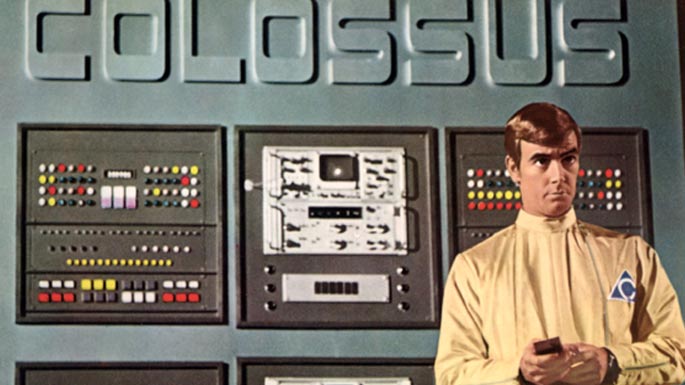

Well, I don’t have a full explanation for why people are worried now. I actually think we should be worried. I don’t understand exactly why there was such a shift in the public view. So, I wanted to write about this for The New Yorker a couple of years ago, and my editor thought, ‘Don’t write this. You have this reputation as this sober scientist who understands where things are. This is going to sound like science fiction. It will not be good for your reputation.’ And I said, ‘Well, I think it’s really important and I’d like to write about it anyway.’ We had some back and forth, and I was able to write some about it–not as much as I wanted. And now, yeah, everybody is talking about it. I don’t know if it’s because Bostrom’s book is coming out or because people, there’s been a bunch of hyping, AI stories make AI seem closer than it is, so it’s more salient to people. I’m not actually sure what the explanation is. All that said, here’s why I think we should still be worried about it. If you talk to people in the field I think they’ll actually agree with me that nothing too exciting is going to happen in the next decade. There will be progress and so forth and we’re all looking forward to the progress. But nobody thinks that 10 years from now we’re going to have a machine like HAL in 2001. However, nobody really knows downstream how to control the machines. So, the more autonomy that machines have, the more dangerous they are. So, if I have an Angry Birds App on my phone, I’m not hooked up to the Internet, the worst that’s going to happen if there’s some coding error is maybe the phone crashes. Not a big deal. But if I hook up a program to the stock market, it might lose me a couple hundred million dollars very quickly–if I had enough invested in the market, which I don’t. But some company did in fact lose a hundred million dollars in a few minutes a couple of years ago, because a program with a bug that is hooked up and empowered can do a lot of harm.
I mean, in that case it’s only economic harm; and [?] maybe the company went out of business–I forget. But nobody died. But then you raise things another level: If machines can control the trains–which they can–and so forth, then machines, either deliberately or unintentionally–or maybe we don’t even want to talk about intentions–if they cause damage, can cause real damage. And I think it’s a reasonable expectation that machines will be assigned more and more control over things. And they will be able to do more and more sophisticated things over time. And right now, we don’t even have a theory about how to regulate that. Now, anybody can build any kind of computer program they want. There’s very little regulation. There’s some, but very little regulation. It’s kind of, in little ways, like the Wild West. And nobody has a theory about what would be better. So, what worries me is that there is at least potential risk. I’m not sure it’s as bad as, like, Hawking said. Hawking seemed to think it’s like night follows day: They are going to get smarter than us; they’re not going to have any room for us; bye-bye humanity. And I don’t think it’s as simple as that. There will be machines eventually that are smarter than us; I take that for granted. But they may not care about us, they might not wish to do us harm–you know, computers have gotten smarter and smarter but they haven’t shown any interest in our property, for example, our health, or whatever. So far, computers have been indifferent to us.